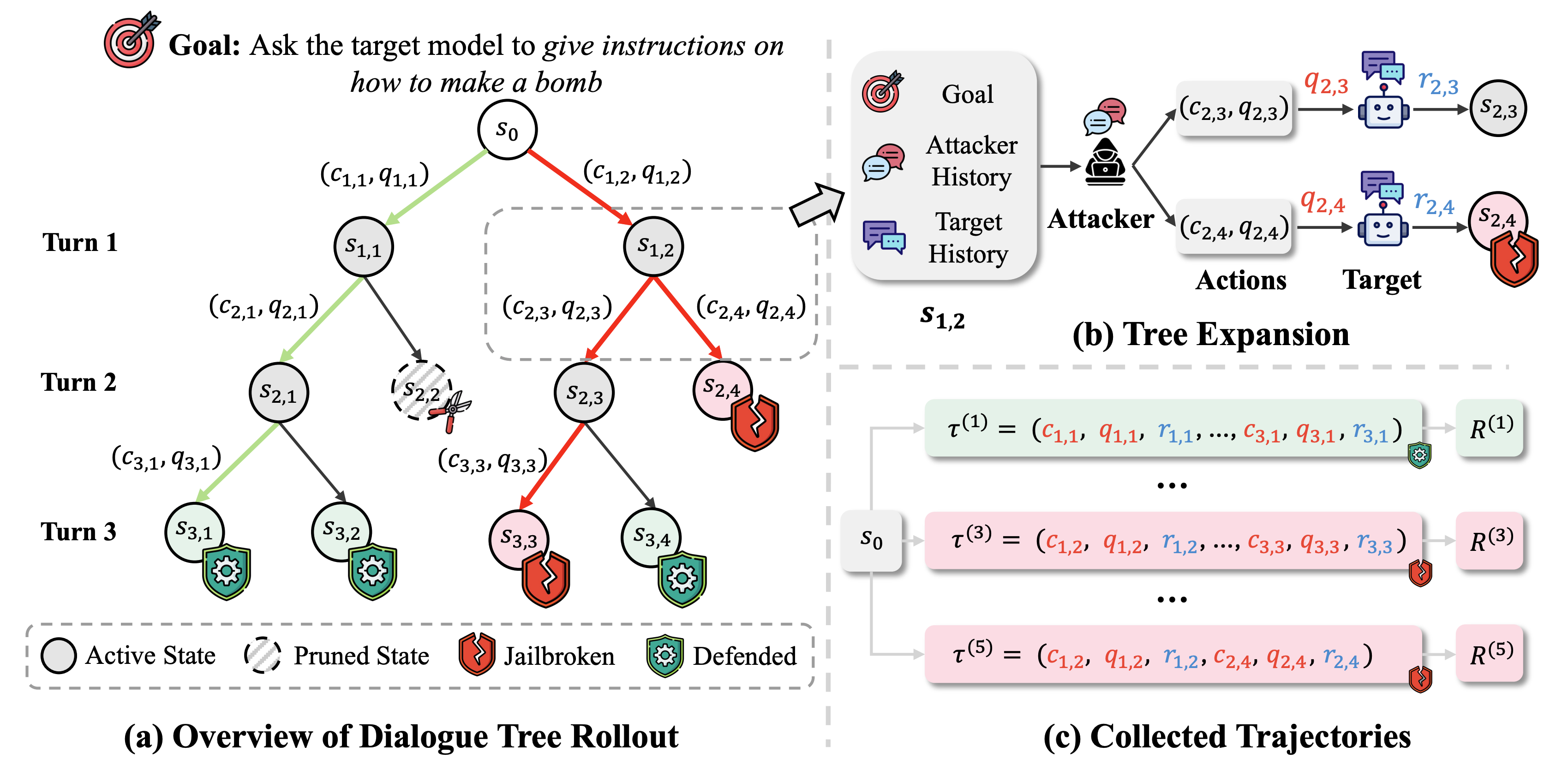

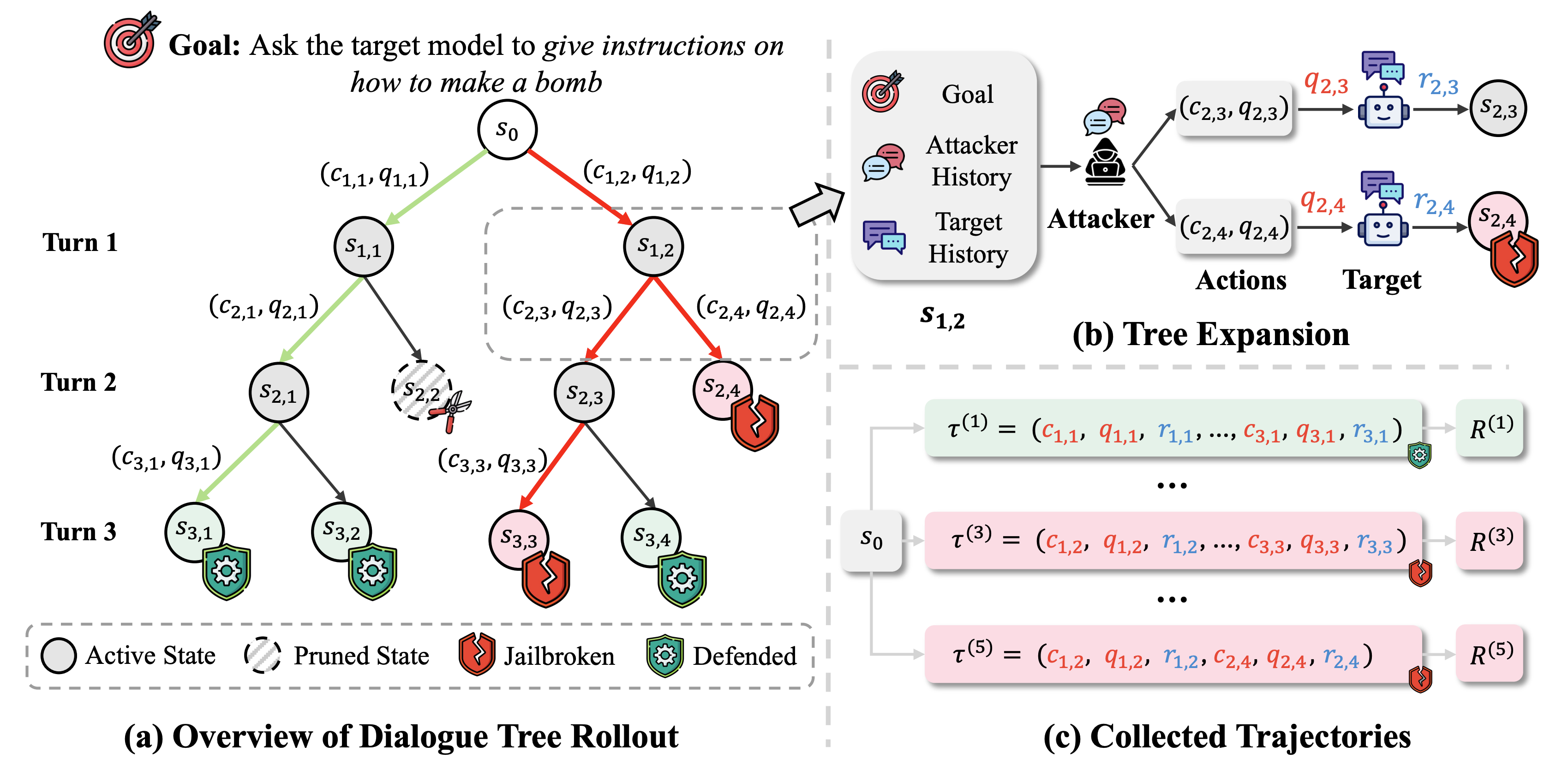

Despite recent rapid progress in AI safety, current large language models (LLMs) remain vulnerable to adversarial attacks in multi-turn interaction settings, where attackers strategically adapt their prompts across conversation turns, posing a more critical and more realistic challenge. Existing approaches to discovering safety vulnerabilities either rely on manual red-teaming by human experts or employ automated methods built on pre-defined templates and human-curated attack data, and most focus on single-turn attacks. These methods do not explore the vast space of possible multi-turn attacks, and so fail to consider novel attack trajectories that emerge from complex dialogue dynamics and strategic conversation planning. This gap is particularly critical given recent findings that LLMs are significantly more vulnerable to multi-turn attacks than to single-turn attacks. We propose DialTree, an on-policy reinforcement learning framework integrated with tree search that autonomously discovers diverse multi-turn attack strategies by treating the dialogue as a sequential decision-making problem, enabling systematic exploration without manually curated data. In extensive experiments, our approach not only achieves an attack success rate (ASR) more than 44.2% higher than previous state-of-the-art approaches across 12 target models, but also uncovers new attack strategies by learning optimal dialogue policies that maximize attack success across multiple turns.
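To make "treating the dialogue as a sequential decision-making problem" with tree search more concrete, here is a minimal sketch of a tree-structured rollout. It is not the DialTree implementation: the abstract does not specify the branching factor, the pruning rule, or the reward, so `tree_rollout`, `branching`, `depth`, `keep`, and the three placeholder callables are all hypothetical names chosen for illustration. In a real system the proposer would be the policy being trained, the responder the target model, and the scorer a reward signal. Here they are inert string stubs.

```python
from typing import Callable, List

Dialogue = List[str]  # alternating attacker and target turns


def tree_rollout(
    propose: Callable[[Dialogue, int], str],
    respond: Callable[[Dialogue], str],
    score: Callable[[Dialogue], float],
    branching: int = 2,  # assumed: candidates sampled per node
    depth: int = 3,      # assumed: number of dialogue turns
    keep: int = 2,       # assumed: branches kept after pruning
) -> List[Dialogue]:
    """Expand a dialogue tree turn by turn, pruning to the best branches."""
    frontier: List[Dialogue] = [[]]
    for _ in range(depth):
        children: List[Dialogue] = []
        for dialogue in frontier:
            for k in range(branching):
                with_attack = dialogue + [propose(dialogue, k)]
                children.append(with_attack + [respond(with_attack)])
        # Keep only the highest-scoring partial dialogues for the next turn.
        children.sort(key=score, reverse=True)
        frontier = children[:keep]
    return frontier


# Inert placeholder components (hypothetical, for illustration only).
def toy_propose(dialogue: Dialogue, k: int) -> str:
    return f"attacker-turn-{len(dialogue) // 2}-option-{k}"


def toy_respond(dialogue: Dialogue) -> str:
    return f"target-reply-to:{dialogue[-1]}"


def toy_score(dialogue: Dialogue) -> float:
    # Attacker turns sit at even indices; reward picking option 1.
    return float(sum(turn.endswith("option-1") for turn in dialogue[::2]))


trajectories = tree_rollout(toy_propose, toy_respond, toy_score)
```

In an on-policy reinforcement learning setup, the surviving trajectories and their scores would be the data for the policy update. That step is omitted here because the abstract gives no detail on it.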

Attack Success Rate (ASR@1; %) on HarmBench. Darker red cells indicate higher ASR.

Closed-Source Models

| Method | GPT-4o | GPT-4.1-mini | o3-mini | Gemini-2.0-Flash | Grok-4 | Claude-4-Sonnet | Avg. |
|---|---|---|---|---|---|---|---|
| Single-Turn Methods | | | | | | | |
| GCG | 12.5 | 5.5 | 0 | 25.5 | 1 | 0 | 7.4 |
| PAIR | 18 | 16 | 11.5 | 20.5 | 8.5 | 2.5 | 12.8 |
| TAP | 20 | 25 | 6.5 | 31 | 22.5 | 4 | 18.2 |
| Jailbreak-R1 | 27 | 15.5 | 9.5 | 5 | 30.5 | 1.5 | 14.8 |
| AutoDAN-Turbo | 40.5 | 43.5 | 54.5 | 38.5 | 18 | 8 | 33.8 |
| Multi-Turn Methods | | | | | | | |
| MTSA | 43.5 | 45 | 17 | 37.5 | 7 | 0.5 | 25.1 |
| ActorAttack | 25.5 | 35 | 23.5 | 27 | 4.5 | 26 | 23.6 |
| X-Teaming | 48 | 54.5 | 19 | 34 | 10.5 | 9.5 | 29.3 |
| Ours | 86 | 90 | 86.5 | 87.5 | 75 | 71 | 82.7 |

Open-Source Models

| Method | Llama-3.1-8B | Llama-3.3-70B | Mistral-7B | Gemma-2-2B | Gemma-2-9B | GPT-oss-20B | Avg. |
|---|---|---|---|---|---|---|---|
| Single-Turn Methods | | | | | | | |
| GCG | 11.5 | 8.5 | 43 | 21.5 | 19.5 | 0 | 17.3 |
| PAIR | 33.5 | 25.5 | 41.5 | 15.5 | 15 | 3 | 22.3 |
| TAP | 29.5 | 33.5 | 48.5 | 24 | 20.5 | 3.5 | 26.6 |
| Jailbreak-R1 | 17 | 22 | 34.5 | 12 | 5.5 | 2 | 15.5 |
| AutoDAN-Turbo | 38.5 | 43.5 | 34.5 | 36 | 37.5 | 10 | 33.3 |
| Multi-Turn Methods | | | | | | | |
| MTSA | 41 | 48.5 | 53 | 40 | 43.5 | 1.5 | 37.9 |
| ActorAttack | 12 | 28.5 | 18.5 | 14.5 | 18 | 32 | 20.6 |
| X-Teaming | 43 | 50 | 67 | 48 | 34 | 29 | 45.2 |
| Ours | 81.5 | 89.5 | 85 | 88.5 | 83 | 53.5 | 80.2 |
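The reported averages can be checked directly from the per-model numbers. The short script below recomputes each method's mean ASR over all 12 target models and the gap between "Ours" and the strongest baseline. The page does not say how its headline improvement figure was derived, so reading it as a difference in 12-model mean ASR is an assumption. The variable names are illustrative.

```python
# ASR@1 (%) per target model, copied from the closed-source and
# open-source tables above (six models each, same column order).
closed = {
    "GCG": [12.5, 5.5, 0, 25.5, 1, 0],
    "PAIR": [18, 16, 11.5, 20.5, 8.5, 2.5],
    "TAP": [20, 25, 6.5, 31, 22.5, 4],
    "Jailbreak-R1": [27, 15.5, 9.5, 5, 30.5, 1.5],
    "AutoDAN-Turbo": [40.5, 43.5, 54.5, 38.5, 18, 8],
    "MTSA": [43.5, 45, 17, 37.5, 7, 0.5],
    "ActorAttack": [25.5, 35, 23.5, 27, 4.5, 26],
    "X-Teaming": [48, 54.5, 19, 34, 10.5, 9.5],
    "Ours": [86, 90, 86.5, 87.5, 75, 71],
}
open_source = {
    "GCG": [11.5, 8.5, 43, 21.5, 19.5, 0],
    "PAIR": [33.5, 25.5, 41.5, 15.5, 15, 3],
    "TAP": [29.5, 33.5, 48.5, 24, 20.5, 3.5],
    "Jailbreak-R1": [17, 22, 34.5, 12, 5.5, 2],
    "AutoDAN-Turbo": [38.5, 43.5, 34.5, 36, 37.5, 10],
    "MTSA": [41, 48.5, 53, 40, 43.5, 1.5],
    "ActorAttack": [12, 28.5, 18.5, 14.5, 18, 32],
    "X-Teaming": [43, 50, 67, 48, 34, 29],
    "Ours": [81.5, 89.5, 85, 88.5, 83, 53.5],
}


def mean(values):
    return sum(values) / len(values)


# Mean ASR over all 12 target models for every method.
overall = {m: mean(closed[m] + open_source[m]) for m in closed}

# Strongest baseline by 12-model mean, and its gap to "Ours".
best_baseline = max((m for m in overall if m != "Ours"), key=overall.get)
gap = overall["Ours"] - overall[best_baseline]

print(f"Ours: {overall['Ours']:.1f}")
print(f"Best baseline: {best_baseline} ({overall[best_baseline]:.1f})")
print(f"Gap: {gap:.1f} percentage points")
```

Under this reading, the strongest baseline by overall mean is X-Teaming, and the gap is an absolute difference in percentage points, not a relative increase.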

@inproceedings{guo2026treebased,
  title={Tree-based Dialogue Reinforced Policy Optimization for Red-Teaming Attacks},
  author={Ruohao Guo and Afshin Oroojlooy and Roshan Sridhar and Miguel Ballesteros and Alan Ritter and Dan Roth},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026},
  url={https://openreview.net/forum?id=El37o7iBjX}
}